For the past two years, most conversations about AI in customer service have started from the same premise: customers are wary of it.

You can see how that assumption shapes decisions. Teams soften tone, script empathy, and design interactions that try to feel more human. There’s a quiet belief that if AI sounds right, customers will accept it.

We wanted to test that assumption directly.

Our 2026 report surveyed 2,000 consumers across North America, Europe, and Asia-Pacific to understand how people actually experience AI in customer service today: not what they say they want in theory, but what they respond to in practice.

The results point somewhere else entirely: the issue is performance. Customers are already willing to use AI, and in many cases, even prefer it to waiting for a human. What frustrates them is when it falls short.

That gap between enthusiasm and experience is where most AI strategies are getting stuck, and where agentic AI in customer experience becomes crucial.

Three numbers that define the moment

Some research takes time to interpret. We surfaced three numbers early, and together they tell a complete story:

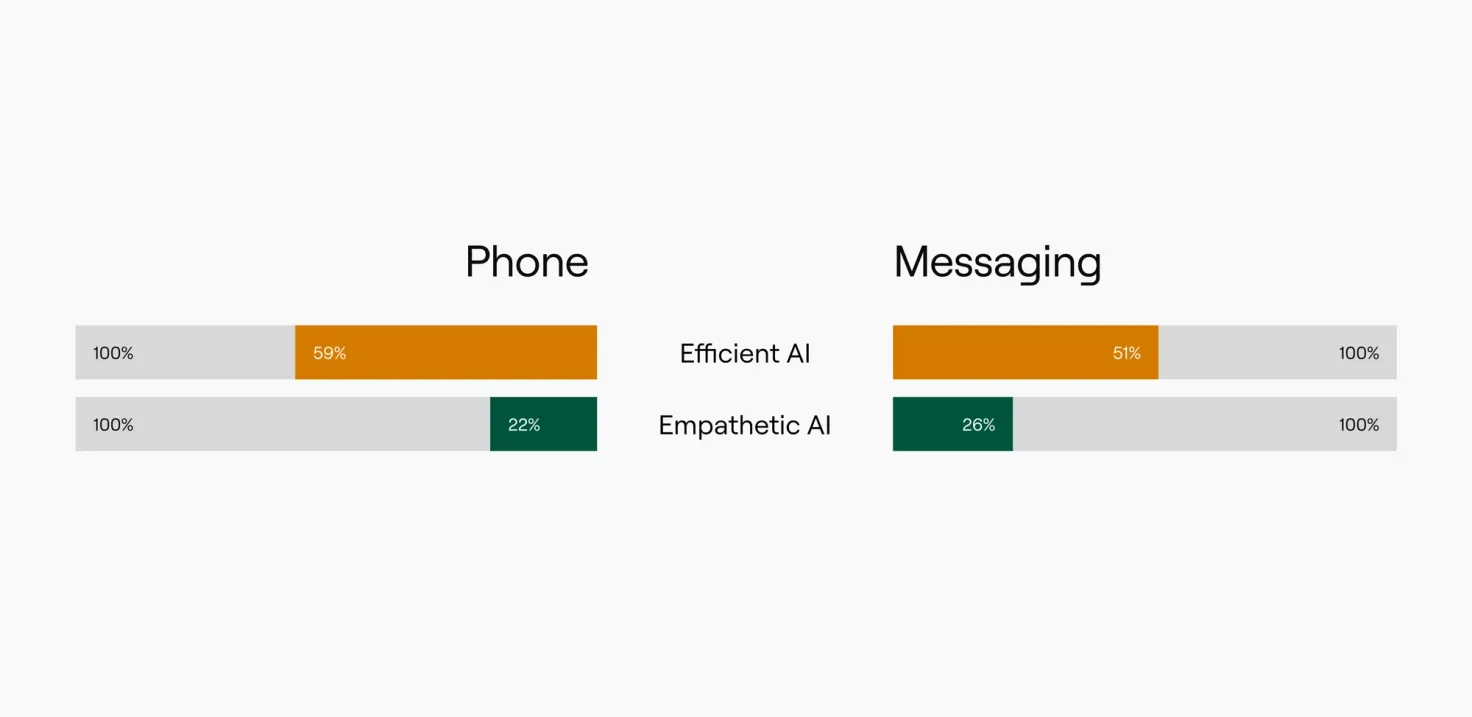

- 59% of consumers prefer instant, always-on AI over waiting for a human, but only when it can resolve their issue.

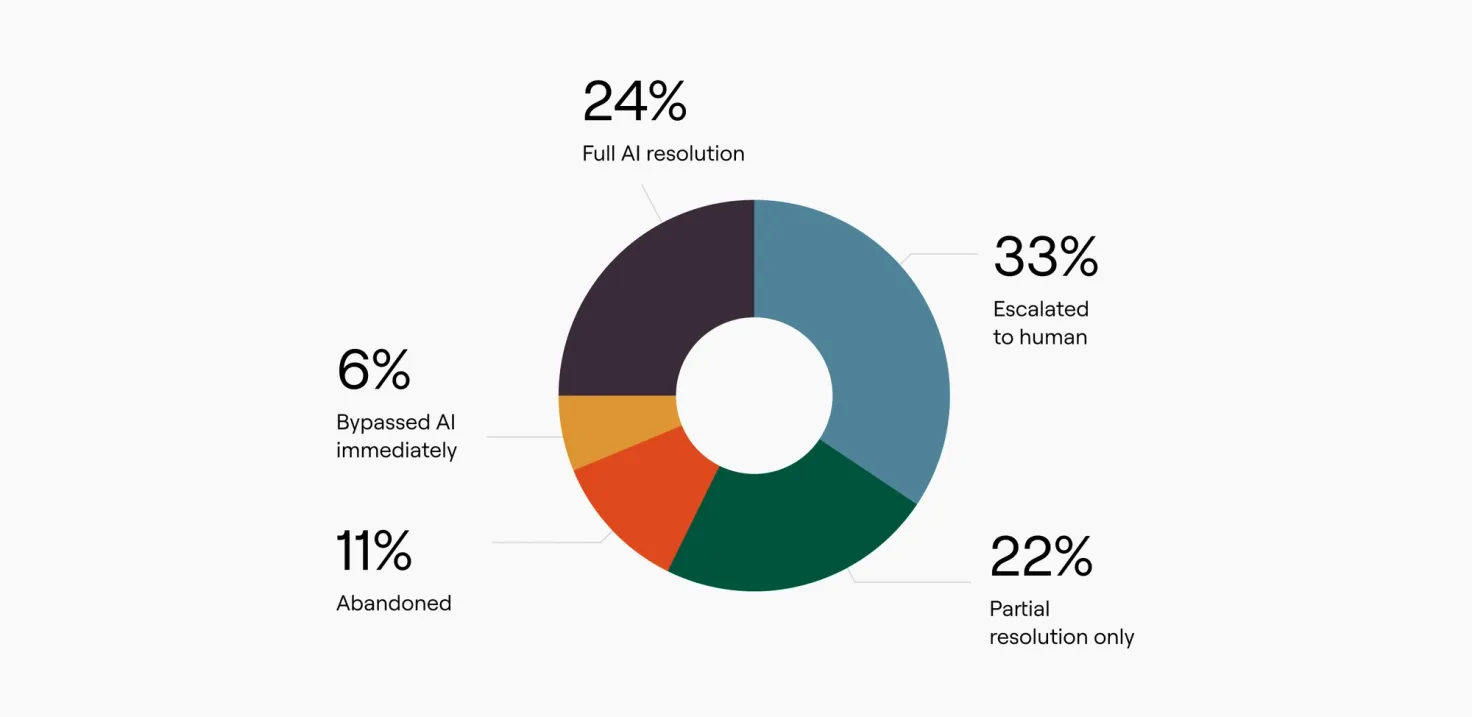

- Just 24% of consumers say their most recent interaction was fully resolved by AI alone.

- Yet 55% of businesses measure AI and human performance together, a dynamic that makes it structurally impossible to isolate how AI agents are actually performing.

There’s a natural progression in those numbers.

- Demand: Customers want immediacy. Waiting for support has become a baseline frustration, not a tradeoff they’re willing to accept.

- Delivery: Resolution is inconsistent, and most interactions still depend on escalation or intervention.

- Why the gap persists: When AI and human performance are blended together, it becomes difficult to see what’s actually working and what isn’t.

Taken together, this is the current state of AI customer service: strong demand, uneven execution, and limited visibility into performance.

What customers actually value

A lot of energy has gone into making AI feel more human. It’s an understandable instinct. Customer experience has always been tied to tone, empathy, and communication.

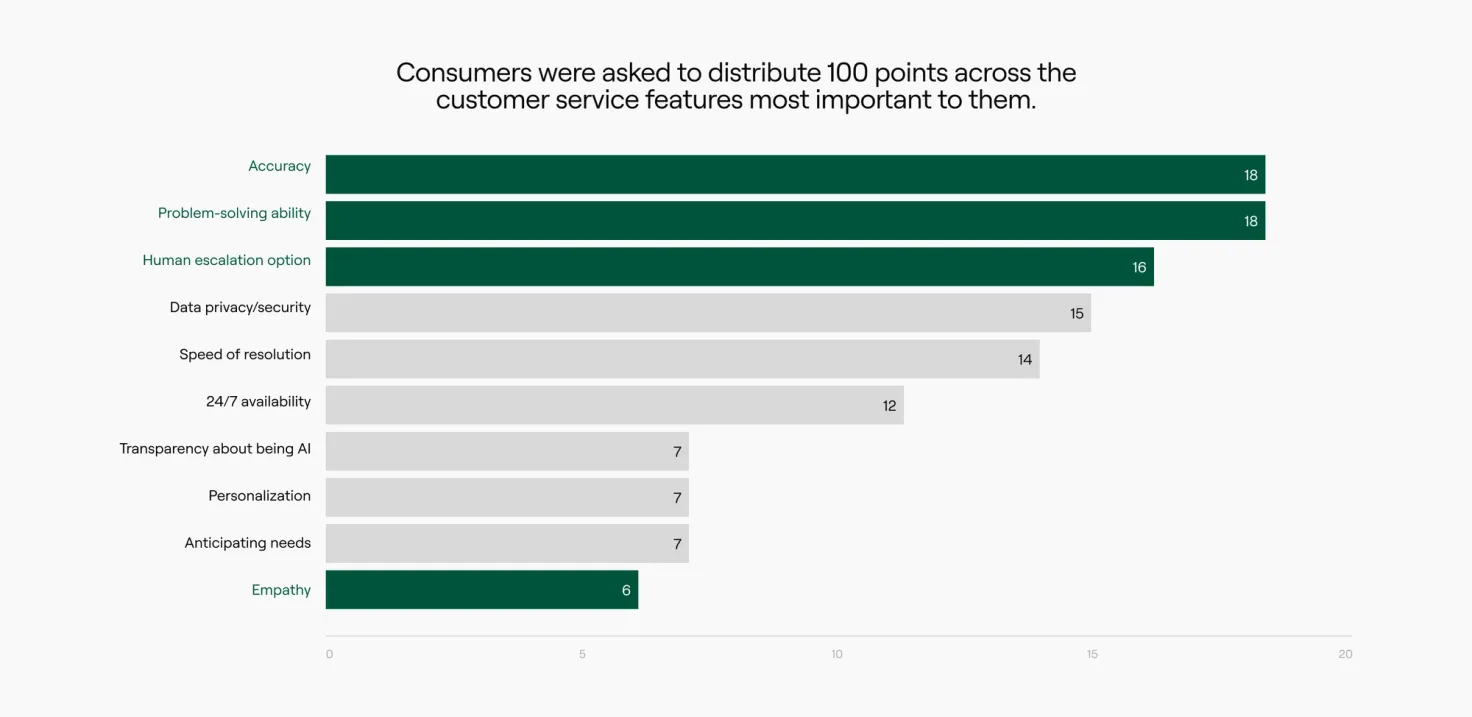

But when we asked consumers to prioritize what matters most in a service interaction, their answers were far more pragmatic:

- Problem-solving ability and accuracy came out on top.

- A clear path to a human followed closely behind.

- Empathy ranked last.

Agentic AI for customer service: Why accuracy beats empathy

Consumers ranked accuracy and problem-solving ability above empathy, highlighting a critical insight for enterprises adopting agentic AI for customer service: it’s not how human AI feels, but how effectively it resolves their issues.

This doesn’t mean empathy has no place. It means it doesn’t compensate for a lack of resolution.

We saw that play out clearly in testing. In a billing scenario, consumers were given two experiences: one that resolved the issue quickly and one that was more conversational but slower. The faster experience won by a wide margin.

Speed alone isn’t the point. Resolution is. Speed just makes that resolution visible.

Customers are evaluating whether the system can do the job. If it can, they’re comfortable with AI. If it can’t, the experience breaks down quickly.

Where experiences fail

The numbers on consumer satisfaction tell part of the story. Only 32% of consumers rate their most recent AI customer experience an 8 or higher out of 10. But satisfaction scores alone don't capture where things actually break.

When consumers describe bad AI customer service interactions, three failure patterns dominate:

- 74% said the AI failed to understand their question

- 56% hit a wall where the AI simply couldn't handle the complexity of the task

- 50% got the same unhelpful response repeated back to them in a loop

What these have in common isn't tone or personality. They're capability failures. The AI couldn't do the job.

The frustration that follows compounds in a specific way. When an AI handles the first part of a conversation well—collects context, acknowledges the issue, starts moving toward a resolution—and then stalls, it creates a particular kind of disappointment. Customers have already invested time. They've built up an expectation that help is coming. Then the system stops working.

That late-stage failure is worse than an early one. A quick failure sends you to a human. A failure near the finish line leaves you stranded.

The business consequence shows up in a number that most dashboards never capture: 11% of consumers abandoned their interaction entirely. No escalation request. No follow-up attempt. Just exit.

In most organizations, that moment is invisible. But it represents something concrete: a customer who decided the service wasn't worth continuing to engage with.
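One way to make that moment visible is to label how each AI-led conversation ended. The sketch below is a minimal illustration under stated assumptions, not a description of any particular platform: the `Session` fields and the rules in `classify_outcome` are hypothetical, and the research does not prescribe how abandonment should be detected.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Session:
    # Hypothetical fields; real platforms will expose different signals.
    resolved: bool     # the customer's issue was confirmed solved
    escalated: bool    # the customer was handed to a human
    followed_up: bool  # the customer came back about the same issue


def classify_outcome(s: Session) -> str:
    """Label how an AI-led session ended."""
    if s.escalated:
        return "escalated"
    if s.resolved:
        return "resolved_by_ai"
    if s.followed_up:
        return "retried"
    # No resolution, no escalation, no follow-up: the silent exit.
    return "abandoned"


def outcome_shares(sessions: list[Session]) -> dict[str, float]:
    """Return the share of sessions that ended in each outcome."""
    if not sessions:
        return {}
    counts = Counter(classify_outcome(s) for s in sessions)
    return {label: n / len(sessions) for label, n in counts.items()}
```

Reporting the `abandoned` share next to escalations gives the silent exits their own line on the dashboard instead of letting them disappear into the totals.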

57% of consumers say they would stop using a company's AI service entirely if they couldn't transfer to a human when needed. That's not a preference. It's a hard limit. Yet only 14% of businesses currently offer frictionless escalation at any point in an interaction.

The measurement problem underneath it all

If customers clearly value resolution, it raises a fair question: why aren’t more teams optimizing for it?

Part of the answer is structural.

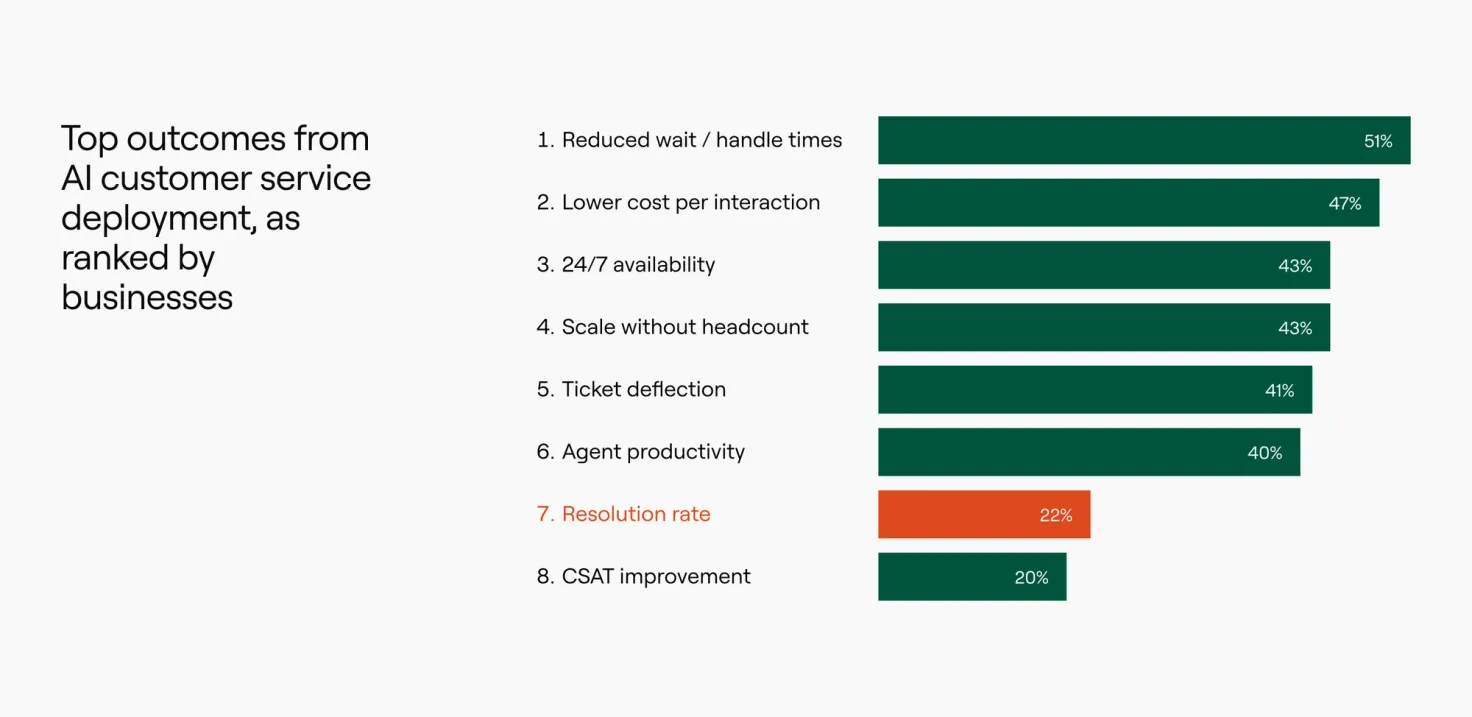

When we asked businesses what outcomes they prioritize from AI, the top responses were tied to efficiency: reduced handle time, lower cost per interaction, ticket deflection, and the ability to scale without adding headcount.

Resolution ranked significantly lower. That doesn’t reflect a lack of awareness. It reflects what’s easiest to measure.

Efficiency metrics are immediate and visible. Resolution is harder to define and track, especially when multiple systems and agents are involved in a single interaction.

The fact that 55% of organizations measure AI and human interactions together compounds the issue. Without clear separation, it becomes difficult to understand where AI is succeeding, where it depends on human support, and where it fails entirely.
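To illustrate why the blended view hides so much, here is a minimal sketch that computes resolution rates per handler type alongside the combined rate. The handler labels (`"ai_only"`, `"ai_then_human"`) and the sample data are assumptions made up for this example; they are not survey results.

```python
from collections import defaultdict


def resolution_rates(interactions):
    """Return the resolution rate per handler type, plus the blended rate.

    `interactions` is an iterable of (handler, resolved) pairs, where
    handler is a hypothetical label such as "ai_only" or "ai_then_human".
    """
    totals = defaultdict(int)
    solved = defaultdict(int)
    for handler, resolved in interactions:
        # Count each interaction once for its handler and once for the blend.
        for key in (handler, "blended"):
            totals[key] += 1
            solved[key] += int(resolved)
    return {key: solved[key] / totals[key] for key in totals}


# Illustrative data only (not survey results): 10 AI-only conversations
# with 2 resolved, and 10 AI-then-human conversations with 9 resolved.
sample = (
    [("ai_only", True)] * 2 + [("ai_only", False)] * 8
    + [("ai_then_human", True)] * 9 + [("ai_then_human", False)] * 1
)
rates = resolution_rates(sample)
```

With this made-up sample, the blended rate is 55% while the AI-only rate is 20%, which is the kind of difference a combined metric conceals.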

As a result, teams optimize for what they can see.

Containment becomes a proxy for success. Deflection becomes a proxy for efficiency. Neither answers the core question of whether the customer’s problem was actually solved.
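The difference between containment and resolution can be made concrete with a short sketch. The `Conversation` fields below are hypothetical, and how "problem solved" gets confirmed (a survey, a follow-up check, a completed transaction) is left as an assumption.

```python
from dataclasses import dataclass


@dataclass
class Conversation:
    # Hypothetical fields for illustration.
    reached_human: bool   # True if the conversation was escalated
    problem_solved: bool  # True if the customer's issue was confirmed solved


def containment_rate(convos):
    """Share of conversations that never reached a human (non-empty list assumed)."""
    return sum(not c.reached_human for c in convos) / len(convos)


def ai_resolution_rate(convos):
    """Share of conversations solved without reaching a human (non-empty list assumed)."""
    solved_by_ai = sum(c.problem_solved and not c.reached_human for c in convos)
    return solved_by_ai / len(convos)


def contained_but_unresolved(convos):
    """Conversations that count as contained yet left the problem unsolved."""
    return [c for c in convos if not c.reached_human and not c.problem_solved]
```

A program can report high containment while `contained_but_unresolved` keeps growing; tracking both numbers side by side shows whether containment reflects solved problems or customers who simply gave up.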

This is where many AI programs plateau. They deliver initial gains, but struggle to improve meaningfully because the feedback loop is incomplete.

From deployment to operation

There’s a broader shift happening beneath these patterns.

Early AI adoption focused on deployment: getting a system live, handling basic interactions, proving that automation could work at scale.

That phase delivered value. It also created a new expectation.

92% of businesses surveyed expect to increase AI investment in customer service over the next 12 months. The mandate has shifted: it's no longer about getting AI deployed; it's about proving it works.

Once AI is part of the experience, customers begin to treat it like any other part of your service. They expect it to be reliable, accurate, and capable of handling increasingly complex tasks. At the same time, organizations are being asked to demonstrate more than efficiency gains. Leadership wants to understand the impact on retention, customer satisfaction, and long-term value.

This is where the conversation changes.

AI is no longer just a tool for reducing cost. It becomes part of how the business delivers and measures customer experience. And that requires a different level of operational discipline:

- Clear definitions of success.

- Separation of AI and human performance.

- Continuous improvement based on real interaction data.

- Alignment between CX metrics and business outcomes.

Without that structure, it’s difficult to move beyond incremental gains.

True enterprise customer experience excellence comes from treating AI as an ongoing operational capability. Run that way, agentic AI in customer experience can take on increasingly complex tasks reliably, turning customer service into a strategic growth driver.

What leading teams are doing differently

The organizations making progress here aren’t necessarily the ones with the most advanced models or the largest AI budgets. They are the ones building systems around those models.

They define resolution carefully and measure it consistently. They separate AI-driven interactions from human-assisted ones. They invest in improving performance over time, rather than treating deployment as the finish line.

They also recognize that capability expands gradually.

Simple use cases establish trust. More complex interactions follow as systems improve and governance becomes more robust. Over time, AI begins to handle tasks that previously required human intervention, not just faster, but with greater consistency.

This is where AI starts to influence outcomes that matter beyond the support function. Retention improves. Customer lifetime value increases. Service becomes a driver of growth rather than a cost center.

Those outcomes are not automatic. They come from treating AI as part of an operating model, not just a channel.

Where this leads

The data in this research points to a clear direction: customers are ready for AI-led experiences, but only when those experiences work. They care less about how human AI sounds than about whether it solves their problem.

The gap is no longer about adoption. It’s about execution, and the businesses that close it will set the pace for what AI in customer service looks like next.

If you want to go deeper on what a successful ACX operating model looks like in practice, we covered the full findings in our recent webinar with CMSWire. The discussion goes beyond the data to explore how leading teams are already moving past the 24% resolution benchmark: what they're measuring, how they're structuring AI performance separately from human performance, and where the biggest opportunities are right now.
