The UK Gambling Commission fined William Hill £19.2 million for social responsibility failings. Ontario's AGCO penalized theScore after a single player bet $2.5 million over eight months with no intervention. In New Jersey, Betway was fined for allowing self-excluded players to keep gambling past their own deposit caps.

Three regulators. Three markets. The same finding: proactive intervention at scale is hard to get right, even when operators are committed to it.

What stands out isn't a single point of failure. It's a pattern of inconsistent execution in moments where timing, context, and consistency matter most.

Responsible gaming now lives or dies in execution. When it falls short, the impact compounds quickly: regulatory exposure, player churn, and reputational damage.

Most gaming operators understand the stakes. Responsible gaming has become a boardroom priority, with dedicated teams, policies, and tooling behind it. The challenge is consistent execution across every channel, every market, and every moment—including the hardest ones.

Gaming companies are being evaluated on how well they identify and support players showing signs of distress, across every channel, at any hour, and in every market they serve. That level of consistency is increasingly non-negotiable, and it is difficult to deliver without AI customer service agents handling interactions in real time, including during peak betting moments.

Operators are investing in responsible gaming, but execution at scale is the hard part

For years, responsible gaming was defined by access: self-exclusion pages, deposit limits, and links to support resources. Most operators met that standard.

The industry has responded. Operators have built programs, hired dedicated teams, and deployed tooling. But as regulatory expectations have shifted from passive tool provision to active, demonstrable harm prevention, the execution challenge has grown significantly.

Affordability checks are becoming more common. KYC requirements are tightening. Shared self-exclusion systems are expanding across markets. Real-time behavioral monitoring is becoming a standard expectation. Enforcement frameworks increasingly weigh repeat violations and prior compliance history.

At the same time, player behavior reinforces the need for this shift. Millions of customers are already using safer gambling tools, and operators are sending tens of millions of safety messages each year.

The expectation isn’t just to have safeguards; it’s to apply them consistently, in real-world conditions, across time zones, languages, channels, and moments of risk.

Where execution breaks down

Even operators with strong programs run into the same wall: traditional support models weren't built for this level of consistency. The impact shows up in player experience:

- A player reaches out in distress and receives a generic, scripted response.

- A promotional message is sent minutes after a deposit limit is set.

- A high-risk signal is missed during a high-volume shift, such as a live event or peak betting window.

Each of these moments erodes trust.

Responsible gaming interactions tend to happen at the most sensitive points in the player lifecycle. When they’re mishandled, players don’t just disengage; they leave with a clear perception of how the operator handled that moment. And that perception spreads.

Most operators don’t lack policy. They lack consistent execution.

Applying responsible gaming standards across channels, markets, languages, and time zones depends heavily on human agents operating under pressure. Even highly trained teams will vary in how they interpret signals, apply policy, and escalate conversations.

That variability isn’t a training issue. It’s a scaling issue.

Why traditional customer service models fall short

Responsible gaming requirements don’t align with how support operations are typically structured:

- Coverage depends on staffing.

- Signal detection depends on individual judgment.

- Consistency varies by agent, shift, and channel.

As volume increases, so does variability.

This is where many teams get stuck: expectations rise, but the underlying system delivering support hasn’t changed.

What consistent, sensitive gaming customer service actually requires

Delivering responsible gaming support at scale comes down to four capabilities working together:

- Always-on availability: Players need support when they need it, not when a team is staffed. That includes late-night interactions, peak events, and cross-market coverage.

- Real-time signal recognition: Risk doesn’t arrive as a single, obvious event. It shows up in patterns: deposit behavior, repeated requests, changes in tone. Identifying and responding to those signals in real time is critical.

- Consistent policy application: Every interaction should reflect the same standards, regardless of channel or agent. Inconsistent responses create both compliance risk and a fragmented player experience.

- Clear, context-rich escalation paths: When human intervention is needed, the transition should be seamless. Agents need full context to step in quickly and appropriately, without asking players to repeat themselves.

Most operators have elements of this in place. Few can deliver all four consistently, across every interaction.

How AI customer service agents improve responsible gaming

To meet today’s standard, customer service needs to do more than respond. It needs to interpret, decide, and act in real time.

AI customer service agents introduce a consistent, always-on layer of execution across the customer experience. They can:

- Recognize behavioral and conversational signals in real time.

- Respond with context and appropriate next steps.

- Apply policy consistently across every interaction.

- Escalate seamlessly when human support is required.

- Operate within strict compliance and data governance standards.

These capabilities need to work together in every interaction. When they do, operators can deliver the level of consistency regulators expect—and players notice.

How leading gaming companies are operationalizing this

For gaming operators, responsible gaming support doesn’t happen in controlled conditions. It happens across jurisdictions, languages, and peak betting windows, often when volumes are highest and signals are hardest to catch.

At that level, consistency isn’t just about coverage. It’s about how decisions are made, how policy is applied, and how performance improves over time.

That’s why leading teams have moved toward a more structured approach, treating AI customer service agents as part of core operations with clear governance, shared standards, and continuous improvement built in. A centralized AI customer service platform enables operators to more easily manage compliance, scale interventions, and consistently apply policy across every interaction.

Agentic customer experience (ACX) reflects that shift, and it's already changing how leading operators run responsible gaming at scale.

The distinction matters. Most operators have AI customer service in some form. ACX is what happens when those capabilities are connected into a governed operating model with clear ownership, shared standards, and continuous improvement built in across every market, channel, and interaction.

For responsible gaming, that shift has a direct impact on how support is actually delivered. Interventions are no longer dependent on a specific agent being available and catching a signal. Policy updates—a new regulatory requirement, a revised escalation threshold, a change in market standards—apply across every channel immediately, not through a retraining cycle that takes weeks and still leaves gaps. And because performance is measured continuously, operators can identify where execution is breaking down before a regulator does.

That's what delivering responsible gaming at scale actually requires: not more AI tools, but a system designed to hold the standard consistently—across every jurisdiction, every language, and every betting window—and improve over time.

At that point, AI agents aren't just assisting support. They're the operating layer through which responsible gaming commitments become player experience.

What this looks like in practice

Within that structure, responsible gaming support starts to operate differently in a few key ways:

Recognizing risk as it emerges

Signals don’t arrive labeled. Repeated deposits, a request to reverse a limit, or a shift in tone mid-conversation all need to be interpreted in context.

AI agents evaluate both behavioral and conversational signals in real time, enabling earlier, more context-aware responses before situations escalate.

Applying policy consistently, every time

Regulators don’t evaluate intent. They evaluate outcomes.

Responsible gaming policies need to be applied the same way across every interaction. This means translating policy into clear, repeatable workflows, so interventions, messaging, and escalation paths are executed consistently across markets, channels, and moments.

Maintaining continuity during escalation

Some situations require human judgment. When they do, continuity matters.

AI agents pass full conversation history and relevant signals to human agents, so players don’t have to repeat themselves, and teams can step in with the right context immediately.

Operating within strict compliance boundaries

Responsible gaming interactions often involve sensitive personal and behavioral data. Systems need built-in safeguards and clear governance, so operators can demonstrate how decisions are made and how data is handled, not just document policy.

Individually, these capabilities are valuable. In practice, they need to work together across every interaction, in every market.

That’s where a structured approach becomes critical: ensuring AI agents aren’t just deployed, but consistently governed, measured, and improved over time.

Responsible gaming is a competitive advantage

Responsible gaming has long been treated as a cost center. That framing is already starting to break down.

The operators leading on responsible gaming now treat it as a differentiator, and the results are showing up in player retention, not just compliance records.

When support is consistent, timely, and context-aware—especially in high-risk moments—it changes how players engage over time. Players who use responsible gaming tools tend to stay active longer and behave more predictably. The experience around those tools determines whether they’re used—and whether they’re effective.

What separates operators isn’t whether they offer safeguards. It’s how well those safeguards are delivered in practice.

That’s where the gap is widening.

Operators relying on manual processes will continue to see inconsistency across markets, channels, and moments that matter most. Operators building a structured, AI-driven approach to customer experience will be able to deliver the consistency regulators expect and players notice.

Agentic CX in 2026: What consumers expect and most enterprises miss

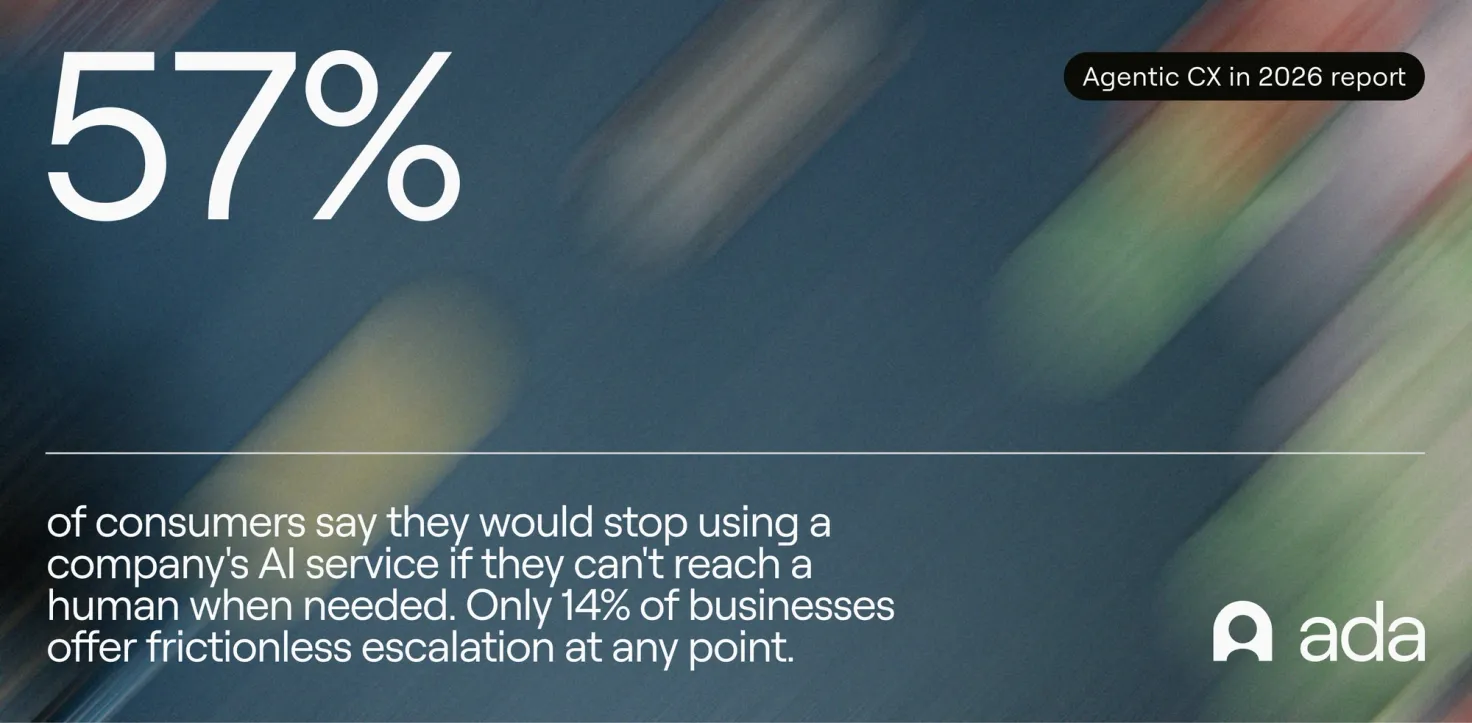

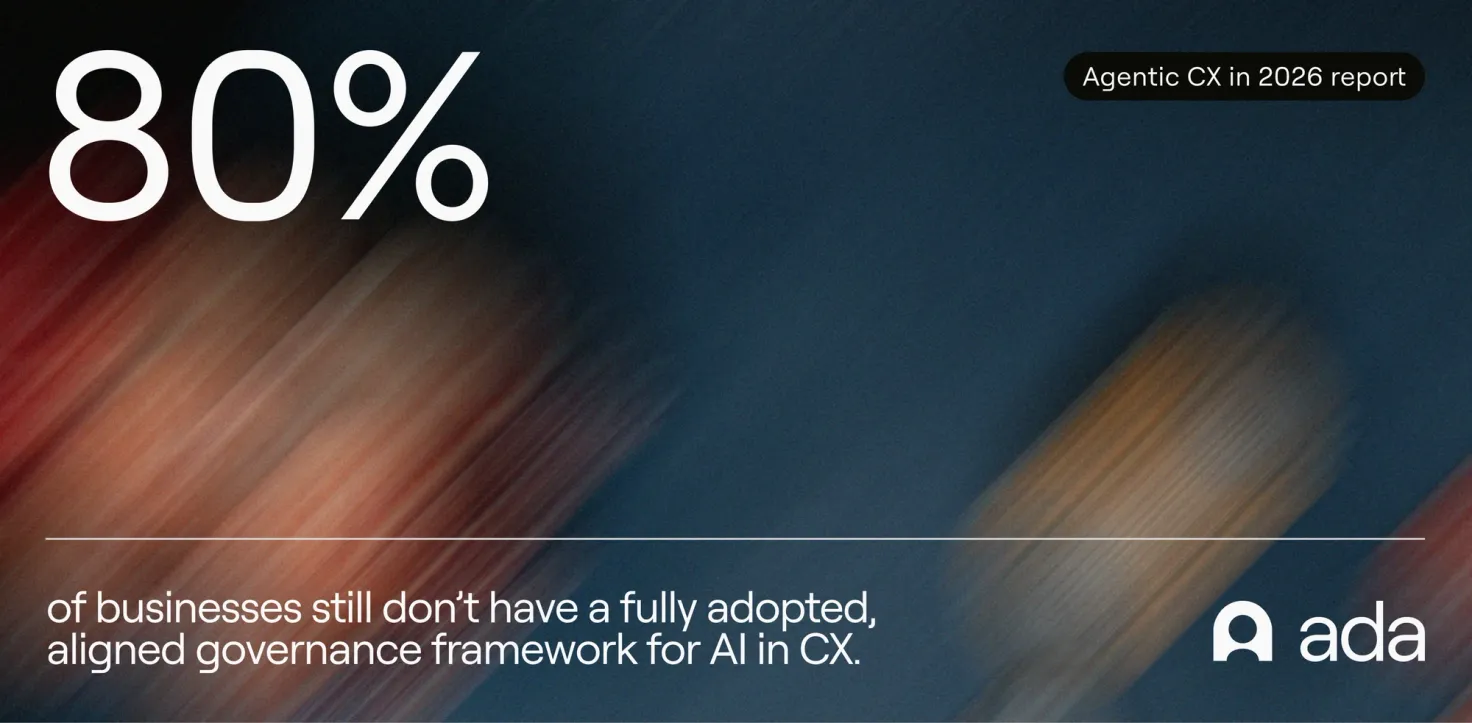

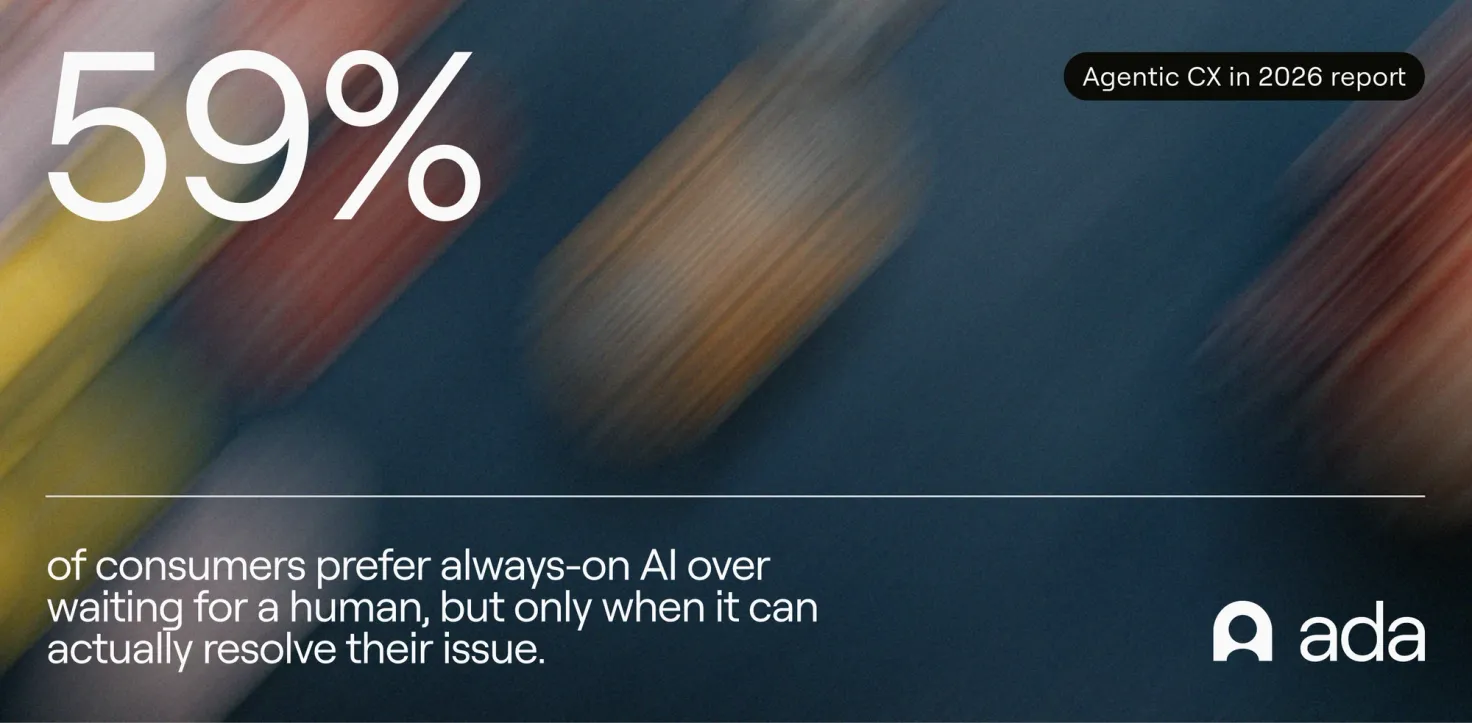

There’s a common assumption that consumers are skeptical of AI in customer service. The data says otherwise. Our 2026 report surveyed 2,000 consumers to understand how people actually experience AI in customer service today.

Build a foundation that can keep pace

Regulatory expectations will keep rising, and so will player expectations. The operators best positioned for what's next are the ones building infrastructure that improves over time.

Meeting those expectations consistently requires more than incremental improvements. It requires a system designed for real-time decision-making, consistent execution, and continuous improvement.

That’s what a governed operating model enables.

For gaming companies, the path forward is clear: move beyond isolated tools and build a system that connects people, processes, and technology, so responsible gaming support improves over time, rather than becoming harder to maintain at scale.