Today, every company is working on incorporating AI into its ethos. We made this prediction a few years ago, and sure enough, we were right.

For customer service, this means transitioning from an agent-first to an AI-first model, where the nucleus of the service organization is the AI and leaders build around it. Your customers are expecting this too. Zendesk’s research shows that 73% of consumers expect more interactions with AI in their daily life, and 74% believe AI will improve customer service efficiency.

In a few years, AI and automation will supplant human agents almost entirely, and human customer service agents will instead be elevated to a more strategic and important role in the company. This foundational shift is naturally accompanied by a shift in how we measure success. Agent-first metrics will continue to be relevant — albeit adapted to a new paradigm — but a new metric will become the North Star for customer support: Automated Resolutions (AR).

Automated Resolutions are conversations between a customer and a company that are safe, accurate, relevant, and don’t involve a human.

As you embark on becoming an AI-first customer service organization, it’s crucial to ensure that you are onboarding solutions from AI-native companies: companies that are not just selling AI software, but also using it internally in day-to-day processes across the entire organization. This is the kind of dedication that ensures the product is truly purpose-built for an AI-first world.

To demonstrate this concept, I’m going to dive into how we built Ada’s AI Agent to optimize for AR. When a customer makes an inquiry and the AI Agent generates an answer, Ada runs processes to ensure that the generation is safe, accurate, and relevant. Let’s dig into them.

safe

The first step is identifying whether the generated answer is safe. Safe means that the AI Agent interacts with the customer in a respectful manner and avoids engaging in topics that cause danger or harm.

Ada currently incorporates several measures to achieve this, including OpenAI’s safety tools.

accurate and relevant

The next step is ensuring that the generated answer is accurate and relevant. Accurate means that the AI Agent provides correct, up-to-date information with respect to the company’s knowledge and policies, invokes the correct APIs to fulfill the customer’s request, and returns the correct output data (e.g. order details, account information, etc).

Relevant means that the AI Agent effectively understands the customer’s inquiry and provides directly related information or assistance. Without this, the AI Agent might generate truthful facts that are unrelated to the customer’s inquiry and don’t resolve anything.

knowledge base generation

When thinking about generative AI for customer support, you have to consider the knowledge sources that the AI Agent is using to generate answers. When AI is powered by publicly available LLMs, it distills information from all over the internet.

While this is more or less okay for general use, it becomes much riskier for customer support, especially if it gives customers answers that are offensive or factually incorrect. Depending on the severity of the misinformation, your company can become liable for legal or financial reparations.

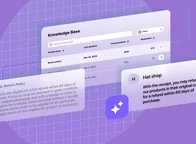

For Ada’s generative capabilities, we’ve limited the knowledge source that the AI distills information from to only the company’s first-party support documentation. By training the AI to use only the company’s knowledge base and other internal data, we can ensure that the generated answer is grounded in the company’s official documents and therefore mitigate a lot of risk.

When the AI generates an answer, Ada uses text embeddings and an Elasticsearch index to search for and retrieve the most relevant documents from the company’s knowledge base that potentially contain the information needed.
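To make the retrieval step concrete, here is a minimal in-memory sketch of embedding-based search. It is not Ada’s implementation: the `embed` function is a toy bag-of-words stand-in for a learned text-embedding model, the sorted list stands in for an Elasticsearch index, and the names `embed`, `cosine`, `retrieve`, and `KNOWLEDGE_BASE` are all hypothetical.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: lowercase bag-of-words counts (assumption: a real
    system would call a learned text-embedding model here)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


# Hypothetical first-party knowledge base articles.
KNOWLEDGE_BASE = [
    "Refunds are available within 10 days of purchase.",
    "You can upgrade your account from the billing page.",
    "Flight information is available in the mobile app.",
]

top = retrieve("how do I upgrade my account", KNOWLEDGE_BASE, k=1)
```

Because the search only ever ranks documents from the company’s own knowledge base, whatever is passed on to the generation step comes from first-party content.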

Then Ada gets to work using an ensemble of methods to make sure that the generation is grounded in the documents retrieved from Elasticsearch, primarily a BERT-based natural language inference model and a fine-tuned LLM.

- The BERT-based natural language inference model is trained on pairs of sentences, each labeled with a binary flag indicating whether sentence B is logically entailed by sentence A. Ada runs the model on each retrieved document paired with the generated answer to estimate the probability that the generation is logically entailed by any of these documents.

- Ada also consults a fine-tuned LLM for the same purpose, then combines the two outputs into probabilities that determine the confidence level in the accuracy of the response.
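The ensemble described above can be sketched roughly as follows. This is an illustration under stated assumptions, not Ada’s actual method: the post does not say how the two outputs are combined, so the weighted average and the max over documents are my own choices, and both scorers are deterministic stubs standing in for the NLI model and the fine-tuned LLM.

```python
from typing import Callable, Sequence

# (document, answer) -> probability that the document entails the answer
Scorer = Callable[[str, str], float]


def grounding_confidence(
    answer: str,
    documents: Sequence[str],
    nli_scorer: Scorer,
    llm_scorer: Scorer,
    nli_weight: float = 0.5,  # assumption: the real weighting is not published
) -> float:
    """Combine two entailment scorers into one confidence value.

    The answer counts as grounded if ANY retrieved document entails it,
    so each scorer contributes its best score across documents.
    """
    if not documents:
        return 0.0
    nli = max(nli_scorer(doc, answer) for doc in documents)
    llm = max(llm_scorer(doc, answer) for doc in documents)
    return nli_weight * nli + (1.0 - nli_weight) * llm


def stub_nli(doc: str, answer: str) -> float:
    """Stub: fraction of answer words found in the document."""
    words = answer.lower().split()
    doc_words = set(doc.lower().split())
    return sum(w in doc_words for w in words) / len(words) if words else 0.0


def stub_llm(doc: str, answer: str) -> float:
    """Stub: a fixed score keyed on one topic word."""
    return 0.6 if "refunds" in doc.lower() else 0.1


docs = [
    "refunds are available within 10 days of purchase",
    "upgrade your account from the billing page",
]
confidence = grounding_confidence("refunds within 10 days", docs, stub_nli, stub_llm)
```

A caller could compare `confidence` against a threshold and fall back to a handoff or a clarifying question when the score is too low; the threshold itself would be another tuning decision.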

actions

Another dimension of accuracy concerns Ada invoking (read: taking) actions. Enabling your AI Agent to invoke actions goes a long way toward increasing Automated Resolutions; the AI Agent can now look up an order number, retrieve flight information, upgrade an account, and so on.

As with response generation, there are hallucination risks when LLMs are given the ability to invoke actions. For example:

- The LLM may generate a response that implies that an action was invoked without actually invoking it

- The LLM may send wrong customer information to actions

- The LLM may return wrong values from an action’s result

Ada reduces the chance of hallucinations by separating the reasoning phase from the execution phase. With this phased structure, the AI Agent applies a higher degree of scrutiny before invoking actions and narrows down the decisions it has to make, which lets it focus on executing the action with minimal hallucinations.
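One way to picture the split between reasoning and execution is a plan object that is validated before anything runs. The sketch below is an assumption about how such a separation could look, not a description of Ada’s internals; `Plan`, `ACTIONS`, `validate`, `execute`, and `lookup_order` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class Plan:
    """Output of the reasoning phase: which action to run, with what inputs."""
    action: str
    arguments: dict[str, Any]


def lookup_order(order_id: str) -> dict[str, Any]:
    """Hypothetical action; a real one would call the company's API."""
    return {"order_id": order_id, "status": "shipped"}


# Registry of allowed actions and the argument names each one requires.
ACTIONS: dict[str, tuple[Callable[..., dict[str, Any]], set[str]]] = {
    "lookup_order": (lookup_order, {"order_id"}),
}


def validate(plan: Plan, customer_context: dict[str, Any]) -> None:
    """Reject plans naming unknown actions, wrong arguments, or values
    that do not match what the customer actually provided."""
    if plan.action not in ACTIONS:
        raise ValueError(f"unknown action: {plan.action}")
    _, required = ACTIONS[plan.action]
    if set(plan.arguments) != required:
        raise ValueError(f"expected arguments {sorted(required)}")
    for name, value in plan.arguments.items():
        if customer_context.get(name) != value:
            raise ValueError(f"argument {name!r} not found in customer context")


def execute(plan: Plan, customer_context: dict[str, Any]) -> dict[str, Any]:
    """Execution phase: runs only validated plans and returns the raw
    result, so the reply can quote real values instead of generated ones."""
    validate(plan, customer_context)
    handler, _ = ACTIONS[plan.action]
    return handler(**plan.arguments)


context = {"order_id": "A123"}
result = execute(Plan("lookup_order", {"order_id": "A123"}), context)
```

Each check maps to one of the risks listed earlier: the action is really invoked rather than implied, the arguments must match customer-provided data, and the returned values come straight from the action’s result.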

guidance and rules

Two major features that help keep generations compliant with company policies, and thus relevant, are guidance and rules.

Guidance allows companies to add dynamic instructions for the LLM to follow. You can think of them as ways to direct the LLM towards specific behaviors.

You might want to use guidance when:

- There are instructions that are relevant across multiple knowledge base articles. Example: The AI Agent responds with a link to a ticketing system if the customer is experiencing technical issues.

- There are instructions that the AI Agent wouldn’t be able to find in the knowledge base. Example: The AI Agent sends a special offer if the customer shows interest in a certain product.

- There are instructions that you don’t want to add into knowledge base articles for whatever reason. Example: The AI Agent can answer questions about limited time promotions or temporary outages.

You can also add guidance for more general aspects of the AI Agent, such as conversational style, tone of voice, special instructions for hand offs, and more.

While guidance gives the AI Agent general direction, rules allow you to ensure that specific knowledge, guidance, and actions are only made available to subsets of your customers.

You might want to use rules when:

- There are certain knowledge base articles that are relevant to a specific subset of customers. Example: The AI Agent offers different answers based on the customer’s geographic location.

- You want to ensure that the AI Agent’s actions align with company policies. Example: Your company has a policy that only customers who made a purchase within the past 10 days are eligible for refunds. You can use a rule to ensure that the AI Agent’s Refund action is only made available for selection when customers meet that criterion.

- You want the AI Agent to behave in specific ways depending on who it’s chatting with. Example: Guiding the AI Agent to address customers as “members” if they have a subscription.
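The refund example above can be expressed as a small rule check that filters which actions are offered to the AI Agent. This is a hedged sketch rather than Ada’s rule engine; `Customer`, `purchased_within`, `ACTION_RULES`, and `available_actions` are hypothetical names, and the policy is the 10-day window from the example.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Callable, Optional


@dataclass
class Customer:
    last_purchase: Optional[date]  # None if the customer has never purchased


# A rule decides whether something is available to a customer on a given day.
Rule = Callable[[Customer, date], bool]


def purchased_within(days: int) -> Rule:
    """Build a rule that passes only for purchases made in the last `days` days."""
    def rule(customer: Customer, today: date) -> bool:
        if customer.last_purchase is None:
            return False
        age = today - customer.last_purchase
        return timedelta(0) <= age <= timedelta(days=days)
    return rule


# Each action lists the rules a customer must satisfy before it is offered.
ACTION_RULES: dict[str, list[Rule]] = {
    "refund": [purchased_within(10)],
    "lookup_order": [],  # no restrictions
}


def available_actions(customer: Customer, today: date) -> list[str]:
    """Return only the actions whose rules all pass for this customer."""
    return [
        name
        for name, rules in ACTION_RULES.items()
        if all(rule(customer, today) for rule in rules)
    ]


today = date(2024, 5, 20)
recent_buyer = Customer(last_purchase=date(2024, 5, 15))
old_buyer = Customer(last_purchase=date(2024, 4, 1))
```

Filtering before selection means an ineligible customer’s conversation never exposes the Refund action at all, so the policy does not depend on the LLM remembering it.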
